How are AI agents going to use our software? That question went from philosophical to very concrete for us. In a team discussion about an agent-first future, we half-jokingly decided we need to expand from UX to AX (agent experience): Optimize our product so agents want to use us. When we later launched our MCP server to better support AI workflows, we realized we had no idea what was even happening inside it.

So, what do agents experience when using our product? We wouldn’t know!

APIs, AI and AX

My team and I run an API-first product. AI has empowered many people to use us in their workflows. But honestly, LLMs really suck at writing API requests if they just run with the API docs. We can see it in the number of support requests featuring hallucinated parameter names and verbose request structures that have nothing to do with our specs.

That’s frustrating for everyone involved: For our users, for me in customer support and probably for the agent as well.

To make our creative automation API easier to use with AI, we added an MCP integration. It provides pre-built tools that agents can use to reliably generate deterministic images, PDFs and videos from templates. AI adds the capabilities it excels at: taking natural language prompts, parsing intent and deciding what to fill into the dynamic layers before rendering.

Our MCP server setup

The placid.app MCP integration provides a hosted MCP server that users can configure to include only the tools they need (to optimize context size). It’s Laravel-based, using the standard MCP protocol over HTTP. Agents can use tools to search through the user’s templates, understand their layers and trigger renders.

After launching, we loved it and our early adopters loved it – but did agents love it? They’re not giving any feedback (yet). And we didn’t know anything about usage or performance:

- Which tools are they using?

- Are tool calls failing? How often?

- Are they fast enough?

To optimize AX (and ultimately also token efficiency), this information would be valuable.

The problem: MCP is a black box

Unfortunately, the standard MCP tooling doesn't give you any structured visibility into runtime behavior. The server handles JSON-RPC requests from agents, but there is no built-in logging for tool calls, success rates or latency.

Since we’re using Sentry’s application monitoring for everything else, it also tracked our HTTP layer, but the MCP tool calls were invisible inside those transactions. Every request just looked the same - even more so since our MCP server routes directly to our existing REST API.

(Disclaimer: Sentry is sponsoring madewithlaravel.com, but we have been using it forever!)

The solution: A custom MCP tracing middleware

To better understand what’s happening in our MCP server, we looked at Sentry’s AI and MCP monitoring tools. At the time we implemented this, they offered MCP SDK integrations for Python and JavaScript.

These integrations automatically capture a span for each MCP interaction (like a tool call). Think of a span as a structured log entry with a start time, duration and metadata such as inputs, outputs and exceptions. The results are shown in a dedicated MCP dashboard reporting on server activities, performance metrics and tool calls.

Sounds great - now for Laravel please?

Bridging the gap for Laravel MCP observability with Sentry

There was no native PHP MCP integration for Sentry yet, but we didn’t want to wait. Our solution was to write a Laravel middleware that creates the spans for each MCP operation manually, following Sentry’s MCP monitoring conventions.

What we are tracking

Per span, our middleware should track:

- The MCP method (tools/call, resources/read, prompts/get)

- The specific tool, resource or prompt called

- Success or failure (via JSON-RPC error detection)

- Metadata of the tool result (error flags, content count)

- The user making the request
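
To make the list above more concrete, here is a minimal sketch of how the request-side attributes could be derived from a JSON-RPC body. It is an illustration, not the code from our middleware: the function name `mcpSpanAttributes` and the array keys are hypothetical, and the keys are not meant to mirror Sentry's attribute naming. The only thing it relies on is the MCP request shape, where `tools/call` and `prompts/get` carry a `name` and `resources/read` carries a `uri`.

```php
<?php

/**
 * Derive span attributes from a raw JSON-RPC request body.
 * Returns null for anything that is not one of the three traced MCP methods.
 * Function name and array keys are hypothetical, chosen for this example.
 */
function mcpSpanAttributes(string $rawBody, ?string $userId): ?array
{
    $message = json_decode($rawBody, true);
    if (!is_array($message) || !is_string($message['method'] ?? null)) {
        return null;
    }

    $method = $message['method'];
    $params = is_array($message['params'] ?? null) ? $message['params'] : [];

    // tools/call and prompts/get name their target; resources/read uses a URI.
    $target = match ($method) {
        'tools/call', 'prompts/get' => $params['name'] ?? null,
        'resources/read' => $params['uri'] ?? null,
        default => null,
    };

    if (!is_string($target)) {
        return null;
    }

    return [
        'method' => $method,
        'target' => $target,
        'request_id' => $message['id'] ?? null,
        'user_id' => $userId,
    ];
}
```

Requests such as `initialize` or `tools/list` fall through to `null` here, which keeps the sketch focused on the three operations listed above. Whether those should get spans too is a separate design decision.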

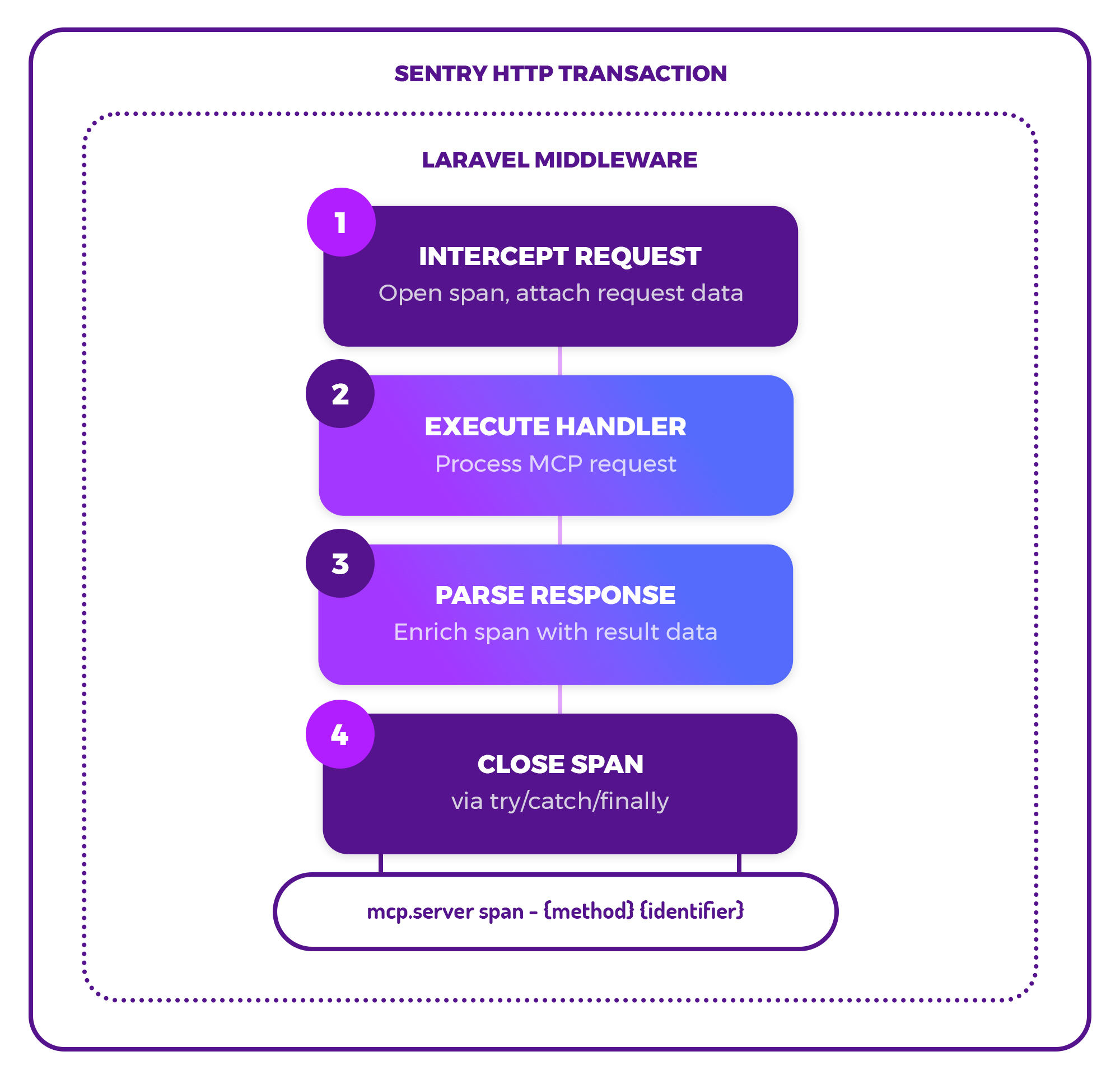

1️⃣ - Intercept the request & create a span

To achieve that, our middleware intercepts the MCP request before our server handles it, and opens a span, attaching the request-level data we already have. Sentry wraps each HTTP request in a so-called transaction – our middleware adds the MCP-level spans as children inside of those.

With this approach, we don’t trace agent workflows across multiple steps, but stay at the tool call level by design. For us, that’s a practical approach to better debug failures for individual image / PDF / video renders.

2️⃣ - Execute the handler

The MCP handler is then executed to process the request, and we wait for the response.

3️⃣ - Parse the response & add data to the span

The response (regular or streamed) is parsed to extract and attach the rest of the metadata we need to the span.
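
As a rough illustration of this step, the sketch below decodes a JSON-RPC response from either a plain JSON body or a `text/event-stream` body, then reduces it to the metadata mentioned earlier (error flag, content count). The function names and result keys are hypothetical. It also assumes each streamed JSON-RPC message sits on a single `data:` line, which is a simplification of the server-sent events format.

```php
<?php

/**
 * Decode a JSON-RPC response from a regular or streamed (SSE) HTTP body.
 * Assumption: each streamed message fits on one "data:" line.
 */
function decodeJsonRpcResponse(string $body, string $contentType): ?array
{
    if (str_starts_with(strtolower($contentType), 'text/event-stream')) {
        $found = null;
        foreach (preg_split('/\r\n|\r|\n/', $body) as $line) {
            if (!str_starts_with($line, 'data:')) {
                continue;
            }
            $decoded = json_decode(ltrim(substr($line, 5)), true);
            // Keep the last message that is an actual response (has result or error).
            if (is_array($decoded) && (array_key_exists('result', $decoded) || array_key_exists('error', $decoded))) {
                $found = $decoded;
            }
        }
        return $found;
    }

    $decoded = json_decode($body, true);
    return is_array($decoded) ? $decoded : null;
}

/**
 * Reduce a decoded response to the metadata attached to the span.
 * A JSON-RPC "error" member and a tool result with isError=true both count as failures.
 */
function summarizeMcpResponse(?array $response): array
{
    if ($response === null) {
        return ['status' => 'unknown'];
    }
    if (is_array($response['error'] ?? null)) {
        return ['status' => 'error', 'error_code' => $response['error']['code'] ?? null];
    }

    $result = is_array($response['result'] ?? null) ? $response['result'] : [];
    $isError = (bool) ($result['isError'] ?? false);
    $content = is_array($result['content'] ?? null) ? $result['content'] : [];

    return [
        'status' => $isError ? 'error' : 'ok',
        'is_error' => $isError,
        'content_count' => count($content),
    ];
}
```

The two failure paths are kept separate on purpose: a protocol-level JSON-RPC error and a tool that ran but reported `isError` are different problems when debugging a failed render.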

4️⃣ - Close the span

Finally, the span needs to be closed. That needs to happen even if an exception occurs, so we’re using try/catch/finally blocks.
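
The open, execute and close lifecycle can be sketched without any SDK at all. In the example below, `DemoSpan` is a hypothetical stand-in that only records a status and a finish time; it is not the Sentry SDK's span class, and `traceMcpCall` is an illustrative helper rather than the middleware itself. The point is the control flow: the `finally` block closes the span on both the success path and the exception path.

```php
<?php

/** Hypothetical stand-in for a tracing span; not the Sentry SDK class. */
final class DemoSpan
{
    public string $status = 'unset';
    public ?float $finishedAt = null;
    public array $data = [];

    public function __construct(public string $name)
    {
    }

    public function finish(): void
    {
        $this->finishedAt = microtime(true);
    }
}

/**
 * Run $handler inside a span and always close the span afterwards.
 * Finished spans are appended to $finishedSpans so the caller can inspect them.
 */
function traceMcpCall(string $name, callable $handler, array &$finishedSpans): mixed
{
    $span = new DemoSpan($name);

    try {
        $response = $handler();
        $span->status = 'ok';

        return $response;
    } catch (Throwable $e) {
        $span->status = 'error';
        $span->data['exception'] = $e::class;

        // Rethrow so the framework's normal error handling still sees it.
        throw $e;
    } finally {
        // Runs on both paths, so no span is left open.
        $span->finish();
        $finishedSpans[] = $span;
    }
}
```

Rethrowing in the `catch` block matters: the tracing layer should observe failures, not swallow them.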

So far so good – that’s how it works conceptually. To take a deeper look at exactly how the code works, you can peek at it in this gist!

What we can see now

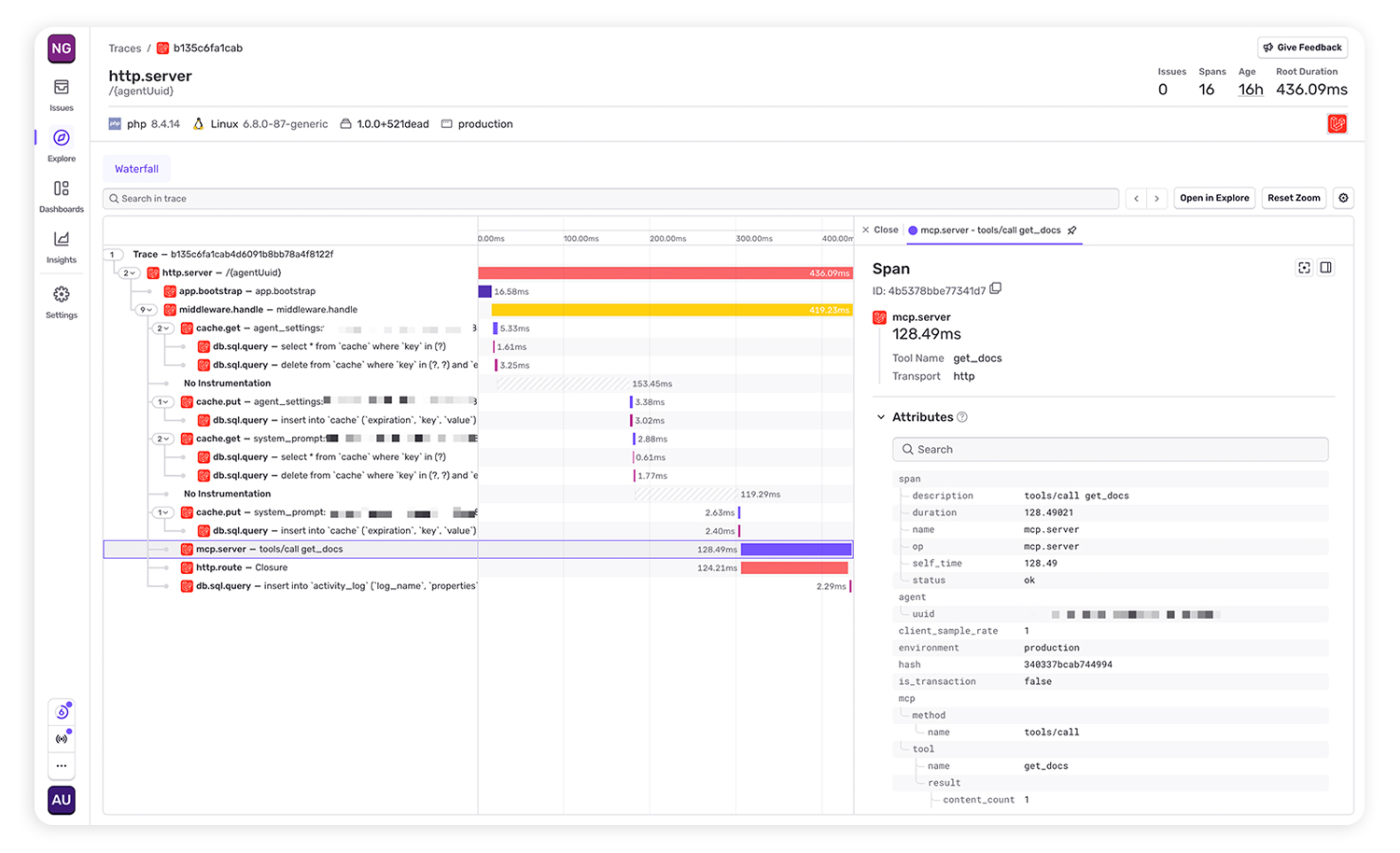

Once implemented, the same transactions in Sentry showed up with mcp.server spans showing tool names, durations and status.

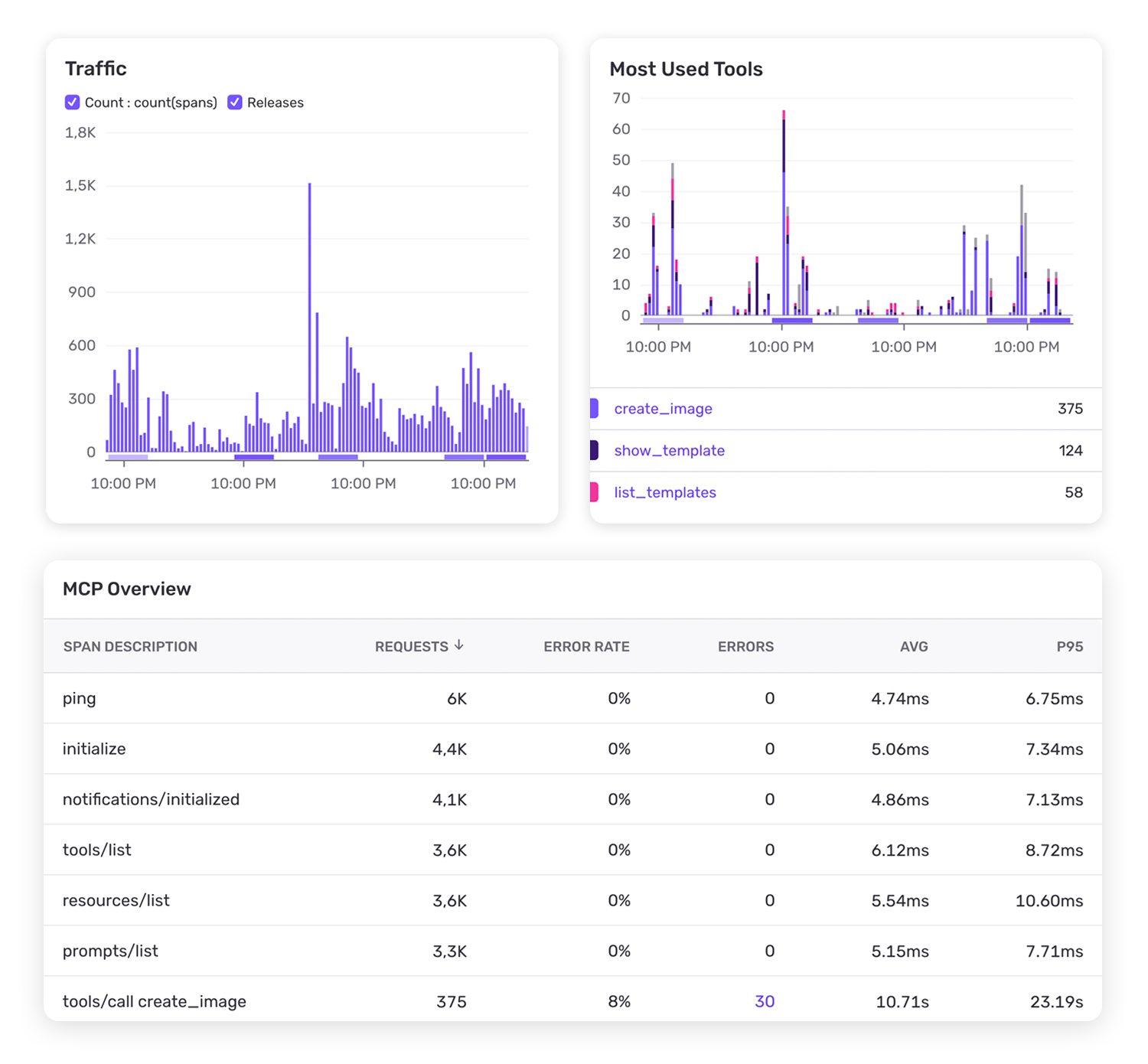

Now we get a better picture of MCP usage and have reporting around:

- 📦️ Which MCP tools are called most (and which are never used)

- 🤖 Which clients and projects generate the most traffic

- 💥 Errors & error rates per tool

- ⏱️ Latency per tool call

- 🐌 Slow tool calls that need optimization

That’s a solid set of data that will help us improve reliability, performance and token efficiency over time. Will that make us popular among AI agents as a target group? Will they recommend us because of great AX? We’re not sure yet.

Build first, optimize always

Monitoring and optimization are great – but rarely our first thought when building something. I think we all need to keep building with and for AI as the ecosystem evolves. But at the end of the day, we’re business owners, too. We need answers to these questions. Observability is the boring part (sorry, monitoring enthusiasts!), but it delivers some of those answers. It turns a black box like an MCP server into insights that help us craft good experiences for humans and agents.

As a developer, I am always happy to see that the tools I trust have already gone 95% of the way for me in their dedicated domain, as I try to do in mine. The Laravel middleware was straightforward to build, and Sentry already had everything else in place.

Feel free to check out and use our PHP / Laravel middleware SentryMcpTracing.php for MCP observability with Sentry, until an official integration drops 👀